What is XCP-ng

# Guides

This section groups various guides covering XCP-ng use cases.

# Reboot or shutdown a host

# General case

The proper way to reboot or shut down a host is:

1. Disable the host so that no new VM can be started on it and so that the rest of the pool knows that the host was disabled on purpose.

   From the command line: xe host-disable host=<hostname>

2. Migrate the VMs running on the host to other hosts in the pool, or shut them down.

3. Reboot or shut down the host.

   This can be done from XO, or from the command line: xe host-reboot host=<hostname> or xe host-shutdown host=<hostname>

4. (After a reboot or host startup) Move VMs back to the host if appropriate. There is no need to re-enable the host: this is done automatically when it starts.

# With «agile» VMs

If all your VMs are «agile», that is, they’re not tied to local storage or local devices (device pass-through), and if there are enough resources on other hosts in the pool, the above can be simplified as:

1. Put the host into maintenance mode from Xen Orchestra. This will disable the host, then evacuate its VMs automatically to other hosts.

   If you prefer to do it from the command line, this is equivalent to: xe host-disable host=<hostname> followed by xe host-evacuate host=<hostname>

2. Reboot or shut down the host.

   This can be done from XO, or from the command line: xe host-reboot host=<hostname> or xe host-shutdown host=<hostname>

3. (After a reboot or host startup) Move VMs back to the host if appropriate. There is no need to re-enable the host: this is done automatically when it starts.
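As a rough sketch of the command-line path, the disable, evacuate and reboot steps can be wrapped in a small shell function run from dom0. The function name `maintenance_reboot` and the hostname in the usage line are placeholders for illustration only. Evacuation can only succeed if every VM is agile and the pool has enough spare capacity, so the sketch stops at the first failing step.

```shell
# Hypothetical helper: put a host into maintenance, then reboot it.
# Stops at the first failing step so the host is never rebooted
# while VMs are still running on it.
maintenance_reboot() {
    host="$1"
    xe host-disable host="$host" || return 1    # no new VM can start on this host
    xe host-evacuate host="$host" || return 1   # move running VMs to other pool members
    xe host-reboot host="$host"                 # the host re-enables itself on startup
}

# Usage (in dom0): maintenance_reboot xcp-host-1
```

Replacing `xe host-reboot` with `xe host-shutdown` in the last step gives the shutdown variant.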

# pfSense / OPNsense VM

pfSense and OPNsense work well in a VM, but there are a few extra steps that need to be taken first.

# 1. Create VM as normal.

# 2. Install Guest Utilities

There are 2 ways of doing that, either using the CLI (pfSense or OPNsense) or the Web UI (pfSense).

Option 1 via console/ssh: Now that you have the VM running, we need to install guest utilities and tell them to run on boot. SSH (or other CLI method) to the VM and perform the following:

Guest Tools are now installed and running, and will automatically run on every boot of the VM.

# 3. Disable TX Checksum Offload

Now comes the most important step: we must disable TX checksum offload on the virtual xen interfaces of the VM. This is because network traffic between VMs in a hypervisor is not populated with a typical Ethernet checksum, since the frames only traverse server memory and never leave over a physical cable. The majority of operating systems know to expect this when virtualized and handle Ethernet frames with empty checksums without issue. However, pf in FreeBSD does not handle them correctly and will drop them, leading to broken performance.

The solution is to simply turn off checksum-offload on the virtual xen interfaces for pfSense in the TX direction only (TX towards the VM itself). Then the packets will be checksummed like normal and pf will no longer complain.

Disabling checksum offloading is only necessary for virtual interfaces. When using PCI Passthrough to provide a VM with direct access to physical or virtual (using SR-IOV) devices, it is unnecessary to disable TX checksum offloading on any interfaces on those devices.

Many guides on the internet for pfSense in Xen VMs will tell you to uncheck checksum options in the pfSense web UI, or to also disable RX offload on the Xen side. These are not only unnecessary, but some of them will make performance worse.

# Using Xen Orchestra

# Using CLI

SSH to dom0 on your XCP-ng hypervisor and run the following:

First get the UUID of the VM to modify:

Find your pfSense / OPNsense VM in the list, and copy the UUID. Now stick the UUID in the following command:

This will list all the virtual interfaces assigned to the VM:

For each interface, you need to take the UUID (the one at the top labeled uuid ( RO) ) and insert it in the xe vif-param-set uuid=xxx other-config:ethtool-tx="off" command. So for our two virtual interfaces the commands to run would look like this:

That’s it! For this to take effect you need to fully shut down the VM then power it back on. Then you are good to go!

If you ever add more virtual NICs to your VM, you will need to go back and do the same steps for these interfaces as well.
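The per-interface commands can also be run in a loop. The following is a minimal sketch meant to be run in dom0; the function name `disable_tx_offload` is hypothetical. It relies on the `--minimal` flag of `xe`, which prints only the matching UUIDs as a comma-separated list.

```shell
# Hypothetical helper: set ethtool-tx=off on every VIF attached to one VM.
disable_tx_offload() {
    vm_uuid="$1"
    # --minimal prints the matching VIF UUIDs as one comma-separated line
    vifs="$(xe vif-list vm-uuid="$vm_uuid" --minimal)" || return 1
    for vif in $(echo "$vifs" | tr ',' ' '); do
        xe vif-param-set uuid="$vif" other-config:ethtool-tx="off" || return 1
    done
}

# Usage (in dom0): disable_tx_offload <vm-uuid>
```

Running it again after adding a NIC covers the new interface too. The VM still needs a full shutdown and power-on for the change to apply.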

# XCP-ng in a VM

This page details how to install XCP-ng as a guest VM inside different hypervisors to test the solution before a bare-metal installation.

This practice is not recommended for production; nested virtualization is intended for testing and lab purposes only.

Here is the list of hypervisors on which you can try XCP-ng:

# Nested XCP-ng using XCP-ng

# Nested XCP-ng using Xen

It’s a pretty standard HVM, but you need to use a vif of ioemu type. Check this configuration example:

# Nested XCP-ng using VMware (ESXi and Workstation)

The following steps can also be performed under VMware Workstation Pro: the settings remain the same, but the configuration is slightly different. This point is discussed at the end of this VMware section.

# Networking settings

The first step, and without a doubt the most important step, will be to modify the virtual network configuration of our ESXi host. Without this configuration, the network will not work for your virtual machines running on your nested XCP-ng.

Start by going to the network settings of your ESXi host.

Then select the port group to which your XCP-ng virtual machine will be connected. By default, this is the ‘VM Network’ port group on vSwitch0.

Click on the «Edit Settings» button to edit the parameters of this port group.

Here are the default settings:

Click on the Accept checkbox for Promiscuous mode.

Save these settings using the Save button at the bottom of the window.

A short explanation from the VMware documentation website: Promiscuous mode under VMware ESXi

These settings can also be applied to the vSwitch itself (same configuration menu). By default, the port group inherits the settings of the vSwitch on which it is configured. It all depends on the network configuration you want to accomplish on your host.

# XCP-ng virtual machine settings

Once your host’s network is set up, we’ll look at configuring the XCP-ng virtual machine.

Create a virtual machine and move to the «Customize settings» section. Here is a possible virtual machine configuration:

Then edit the CPU settings and check the «Expose hardware assisted virtualization to the guest OS» box in the «Hardware Virtualization» line.

The «Enable virtualized CPU performance counters» option can be checked if necessary: VMware CPU Performance Counters

Some explanations for the other virtual machine settings:

Finally, install XCP-ng as usual, everything should work as expected. After installation, your XCP-ng virtual machine is manageable from XCP-ng Center or Xen Orchestra.

You can then create a virtual machine and test how it works (network especially).

# Configuration under VMware Workstation Pro 14/15

Create an XCP-ng virtual machine like in ESXi.

Check the following CPU setting: Virtualize Intel VT-x/EPT or AMD-V/RVI

hypervisor.cpuid.v0 = "FALSE" : Addition to the checked CPU option on Workstation

ethernet0.noPromisc = "FALSE" : Enable Promiscuous Mode

Replace ethernet0.virtualDev = "e1000" with ethernet0.virtualDev = "vmxnet3"

Check if the virtual machine correctly works by trying to connect using XCP-ng Center and by creating a virtual machine on your nested XCP-ng.

# Nested XCP-ng using Hyper-V

The following steps can be performed with Hyper-V on Windows 10 (version 1607 minimum) and Windows Server 2016 (including Hyper-V Server). The settings are the same for both operating systems.

This feature is not available with Windows 8 and Windows Server 2012/2012 R2. An Intel CPU is also required (AMD is not supported yet).

Unlike VMware, you must first create the virtual machine before configuring nested virtualization: under Hyper-V, nested virtualization is a parameter applied to the virtual machine, not a global configuration of the hypervisor.

# XCP-ng virtual machine settings

# CPU and Network settings

Once the virtual machine is created, it is possible to enable nested virtualization for this virtual machine. Open a PowerShell Administrator prompt:

Next, configure the network so that guest virtual machines can access the outside network.

Important: This setting has to be applied even if you use the NAT Default Switch (since Windows 10 1709).

After these configurations, you should be able to manage this XCP-ng host from XCP-ng Center or from a Xen Orchestra instance.

# Nested XCP-ng using QEMU/KVM

The following steps can be performed using QEMU/KVM on a Linux host, Proxmox or oVirt.

Like VMware, you must first enable the nested virtualization feature on your host before creating your XCP-ng virtual machine.

# Configure KVM nested virtualization (Intel)

Check if your CPU supports virtualization and EPT (Intel)

On most Linux distributions:

If everything is OK, you can check if nested virtualization is already activated.

If the command returns «Y», nested virtualization is activated; if not, you should activate it (next steps).

First, make sure that no virtual machine is running. Then unload the KVM module as root or with sudo:

Activate the nested virtualization feature:

modprobe kvm_intel nested=1

Nested virtualization is enabled until the host is rebooted. To enable it permanently, add the following line to the /etc/modprobe.d/kvm.conf file:

options kvm_intel nested=1
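The "is nested virtualization already active" check can be scripted by reading the module parameter exposed under `/sys/module`. The sketch below is an illustration only: the function name `nested_enabled` is hypothetical, and it accepts either `Y` or `1` because the value printed can differ between kernel versions and between the Intel and AMD modules.

```shell
# Hypothetical helper: report whether KVM nested virtualization is enabled.
# Returns 0 if enabled, 1 if disabled, 2 if the parameter file is missing
# (module not loaded, or wrong CPU vendor).
nested_enabled() {
    param_file="${1:-/sys/module/kvm_intel/parameters/nested}"
    [ -r "$param_file" ] || return 2
    case "$(cat "$param_file")" in
        Y|1) return 0 ;;
        *)   return 1 ;;
    esac
}

# Usage:
#   nested_enabled && echo "nested virtualization is active"
#   nested_enabled /sys/module/kvm_amd/parameters/nested   # AMD hosts
```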

# Configure KVM nested virtualization (AMD)

# XCP-ng virtual machine settings

The configuration of the virtual machine will use mostly emulated components for disks and network. VirtIO drivers are not included in the XS/XCP-ng kernel.

# VLAN Trunking in a VM

This document describes how to configure a VLAN trunk port for use in a VM running on XCP-ng. The typical use case is running your network’s router as a VM on a network with multiple VLANs.

This solution was worked out with some help from others in the forums, and this document discusses how to implement it using pfSense as your router. In theory, the same solution should apply to other router solutions, but it is untested. Feel free to update this document with your results.

# Two Approaches

There are two approaches to VLANs in XCP-ng. The first is to create a vif for each VLAN you want your router to route traffic for, then attach each vif to your VM. The second is to pass through a trunk port from dom0 onto your router VM.

# Multiple VIFs

By far, this is the easiest solution and perhaps the «officially supported» approach for XCP-ng. When you do this, dom0 handles all the VLAN tagging for you and each vif is just presented to your router VM as a separate virtual network interface. It’s like you have a bunch of separate network cards installed on your router where each represents a different VLAN and is essentially attached to a VLAN access (untagged) port on your switch. There is nothing special for you to do, this just works. If you require 7 vifs or fewer for your router then this might be the easiest approach.

The problem with this approach arises when you have many VLANs to configure on your router. If you read through the thread linked at the top of this page, you’ll notice the discussion where I was unable to attach more than 7 vifs to my pfSense VM. Neither XO nor XCP-ng Center allows you to attach more than seven. This appears to be some kind of limit somewhere in Xen. Other users have been able to attach more than 7 vifs via the CLI; however, when I tried to do this myself, my pfSense VM became unresponsive once I added the 8th vif. More details on that problem are discussed in the thread.

Another problem with this approach, perhaps only specific to pfSense, is that when you attach many vifs, you must disable TX offloading on each and every vif otherwise you’ll have all kinds of problems. This was definitely a red flag for me. Initially I’m starting with 7 vlans and 9 networks total with short term requirements for at least another 3 vlans for sure and then who knows how many down the road. In this approach, every time you have to create a new VLAN by adding a vif to the VM, you will have to reboot the VM.

Having to reboot my network’s router every time I need to create a new VLAN is not ideal for the environment I’m working in; especially because in the current production environment running VMware, we do not need to reboot the router VM to create new vlans. (FWIW, I’ve come to xcp-ng as the IT department has asked me to investigate possibly replacing our VMware env with XCP-ng. I started my adventures with xcp-ng by diving in head first at home and replacing my home environment, previously ESXi, with xcp-ng. Now I’m in the early phases of working with xcp-ng in the test lab at work.)

In conclusion, if you have seven or fewer vifs and you’re fairly confident that you’ll never need to exceed seven, then this approach is probably the path of least resistance. On the other hand, if you know you’ll need more than seven (or are fairly certain you will some day), or you’re in an environment where you need to be able to create VLANs on the fly, then you’ll probably want to proceed with the alternative below.

This document is about the alternative approach, but a quick summary of how this solution works in xcp-ng:

The pfSense / OPNsense VM guide fully explains all of the config changes required when running pfSense in XCP-ng.

# Adding VLAN Trunk to VM

The alternative approach involves attaching the VLAN trunk port directly to your router VM, and handling the VLANs in pfSense directly. Its biggest advantage is that it does not require a VM reboot each time you need to set up a new VLAN. However, note that you will need to manually edit a configuration file in pfSense every time it is upgraded.

The physical interface you are using to trunk VLANs into the pfSense VM should also not be the same physical interface that your XCP-ng management interface is on. This is because one of the required steps is setting the physical interface MTU to 1504, which can cause MTU mismatches if Xen is using this same physical interface for management traffic (1504-byte packets being sent from the Xen management interface to your MTU 1500 network).

The problem we face with this solution is that, at least in pfSense, the xn driver used for the paravirtualization in FreeBSD does not support 802.1q tagging. So we have to account for this ourselves both in dom0 and in the pfSense VM. Once you’re aware of this limitation, it actually isn’t a big deal to get it all working but it just never occurred to me that a presumably relatively modern network driver would not support 802.1q.

Anyway, the first step is to modify the MTU setting of the pif that is carrying your tagged VLANs into the XCP-ng server from 1500 to 1504. The extra 4 bytes are, of course, the size of the VLAN tag within each frame. Warning: You’re going to have to detach or shut down any VMs that are currently using this interface. For this example, let’s say it’s eth1 that is the pif carrying all our tagged traffic.
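One way to make this change with standard `xe` commands is sketched below. This is an assumption about the method, not necessarily the exact procedure used for this guide: it looks up the network behind the physical interface and sets the `MTU` field on that network. The function name `set_trunk_mtu` is hypothetical, and the sketch assumes a single host so that only one PIF matches the device name.

```shell
# Hypothetical helper: raise the MTU of the network behind a physical
# interface (eth1 in this guide) so that 802.1Q-tagged frames fit.
set_trunk_mtu() {
    device="$1"
    mtu="${2:-1504}"   # 1500-byte payload + 4-byte VLAN tag
    # Assumes a single host, so exactly one PIF matches the device name.
    net_uuid="$(xe pif-list device="$device" params=network-uuid --minimal)" || return 1
    [ -n "$net_uuid" ] || return 1
    xe network-param-set uuid="$net_uuid" MTU="$mtu"
}

# Usage (in dom0): set_trunk_mtu eth1
```

The new value typically only takes effect once the interface has been replugged or the host rebooted, which is one more reason to stop the VMs using it first.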

Once this is done, attach a new vif to your pfSense VM and select eth1 as the network. This will attach the VLAN trunk to pfSense. Boot up pfSense and disable TX offloading, etc. on the vif, reboot as necessary then login to pfSense.

Configure the interface within pfSense by also increasing the MTU value to 1504. Again, the xn driver does not support VLAN tagging, so we have to deal with it ourselves. NOTE: Increase the MTU on the parent interface only, in both XCP-ng and pfSense. The MTU for VLANs will always be 1500.

Finally, along the same lines, since the xn driver does not support 802.1q, pfSense will not allow you to create vlans on any interface using the xn driver. We have to modify pfSense to allow us to do this.

From a shell in pfSense, edit /etc/inc/interfaces.inc and modify the is_jumbo_capable function at around line 6761. Edit it so it reads like so:

This modification is based on pfSense 2.4.4p1, ymmv. However, I copied this mod from here

Keep in mind that you will need to reapply this mod anytime you upgrade pfSense.

That’s it, you’re good to go! Go to your interfaces > assignments in pfSense, select the VLANs tab and create your vlans. Everything should work as expected.

# Links/References

# TLS certificate for XCP-ng

After installing XCP-ng, access to xapi via XCP-ng Center or Xen Orchestra is protected by TLS with a self-signed certificate: this means that you have to either verify the certificate signature before allowing the connection (comparing it against the signature shown on the console of the server), or work on a trust-on-first-use basis (i.e. assume that the first time you connect to the server, nobody is tampering with the connection).

If you would like to replace this certificate with a valid one, either from an internal Certificate Authority or from a public one, this section gives some indications on how to do that.

Note that if you use a non-public certificate authority and Xen Orchestra, additional configuration is required on the Xen Orchestra side.

This guidance is valid for XCP-ng up to v8.1. Version 8.2 is expected to improve deployment of new certificates, like Citrix did for XenServer 8.2.

# Generate certificate signing request

You can use the auto-generated key to create a certificate signing request:

# Install the certificate chain

Then, you have to restart xapi :

# Dom0 memory

Dom0 is another name for the privileged domain, also known as the Control Domain.

Issues can arise when the control domain lacks memory, which is why we advise being generous with it whenever possible. Default values from the installer may be too low for your setup. In general, the right value depends on the number of VMs and their workload. If constraints do not allow you to follow the advice below, you can try to set lower values.

# Recommended values

Note: If you use ZFS, assign at least 16GB RAM to avoid swapping. ZFS (in standard configuration) uses half the Dom0 RAM as cache!

# Current RAM usage

You can use htop to see how much RAM is currently used in the dom0. Alternatively, you can use Netdata to show you past values.

# Change dom0 memory

Example with 4 GiB:

Do not mix up the units, and make sure to set the same value as base value and as max value.
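To make the unit handling concrete, the small sketch below builds the Xen boot argument from a size in GiB, so that the base and max values are always identical and always expressed in MiB (the `M` suffix). The function name `dom0_mem_arg` is hypothetical. The `xen-cmdline` invocation in the usage comment is the tool commonly used for this on XCP-ng; treat the exact path as an assumption to verify on your version.

```shell
# Hypothetical helper: build the dom0_mem boot argument from a size in GiB.
# Base value and max value are always set to the same number of MiB.
dom0_mem_arg() {
    gib="$1"
    mib=$((gib * 1024))
    echo "dom0_mem=${mib}M,max:${mib}M"
}

# Usage (in dom0, followed by a host reboot):
#   /opt/xensource/libexec/xen-cmdline --set-xen "$(dom0_mem_arg 4)"
```

For the 4 GiB example, `dom0_mem_arg 4` prints `dom0_mem=4096M,max:4096M`.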

# Autostart VM on boot

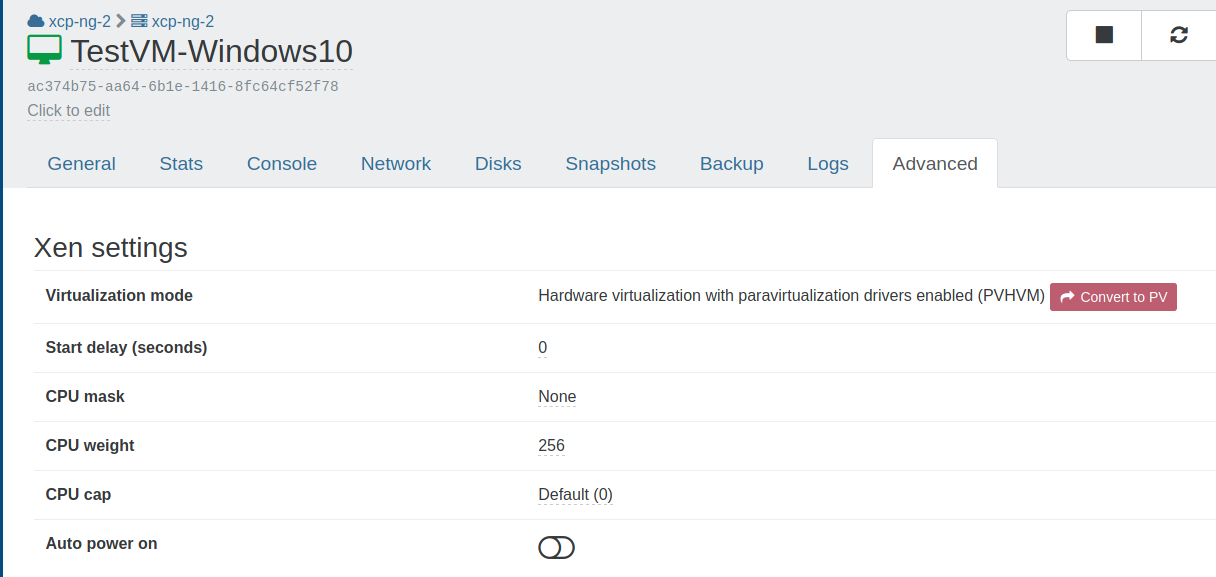

A VM can be started automatically when XCP-ng itself boots; this is called Auto power on. There are two ways to configure it: using Xen Orchestra or via the CLI.

# With Xen Orchestra

In Xen Orchestra we can just enable a toggle in VM «Advanced» view, called Auto power on. Everything will be set accordingly.

# With the CLI

Now we enable autostart at the virtual machine level.

3. Execute the command to get the UUID of the virtual machine:

4. Enable autostart for each virtual machine with the UUID found: xe vm-param-set uuid=<vm-uuid> other-config:auto_poweron=true

5. Check the output: xe vm-param-list uuid=<vm-uuid> | grep other-config
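The CLI procedure can be sketched as one function. Note the assumption: the pool-level part (setting `other-config:auto_poweron=true` on the pool before the VMs) is not visible in the steps above and is included here because auto power on is normally enabled on the pool as well. The function name `enable_autostart` is hypothetical.

```shell
# Hypothetical helper: enable auto power on for the pool, then for each VM
# UUID passed as an argument.
enable_autostart() {
    # Assumes dom0 of a host that belongs to exactly one pool.
    pool_uuid="$(xe pool-list --minimal)" || return 1
    xe pool-param-set uuid="$pool_uuid" other-config:auto_poweron=true || return 1
    for vm_uuid in "$@"; do
        xe vm-param-set uuid="$vm_uuid" other-config:auto_poweron=true || return 1
    done
}

# Usage (in dom0): enable_autostart <vm-uuid-1> <vm-uuid-2>
```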

# Software RAID Storage Repository

XCP-ng has support for creating a software RAID for the operating system but it is limited to RAID level 1 (mirrored drives) and by the size of the drives used. It is strictly intended for hardware redundancy and doesn’t provide any additional storage beyond what a single drive provides.

These instructions describe how to add more storage to XCP-ng using software RAID and show measures that need to be taken to avoid problems that may happen when booting. You should read through these instructions at least once to become familiar with them before proceeding and to evaluate whether the process fits your needs. Look at the «Troubleshooting» section of these instructions to get some idea of the kinds of problems that can happen.

An example installation is described below using a newly installed XCP-ng software RAID system. This covers only one specific possibility for software RAID. See the «More and Different» section of these instructions to see other possibilities.

In addition, the example presented below is a fresh installation and not being installed onto a production system. The changes described in the instructions can be applied to a production system but, as with any system changes, there is always a risk of something going badly and having some data loss. If performing this on a production system, make sure that there are good backups of all VMs and other data on the system that can be restored to this system or even a different one in case of problems.

These instructions assume you are starting with a server already installed with software RAID and have no other storage repositories defined except what may be on the existing RAID.

# Example System

The example system we’re demonstrating here is a small server using 5 identical 1TB hard drives. XCP-ng has already been installed in a software RAID configuration using 2 of the 5 drives. There is already a default «Local storage» repository configured as part of the XCP-ng setup on the existing RAID 1 drive pair.

Before starting the installation, all partitions were removed from the drives and the drives were overwritten with zeroes.

So before starting out, here is an overview of the already configured system:

The 5 drives are in place as sda through sde and as can be seen from the list are exactly the same size. The RAID 1 drive pair is set up as the XCP-ng default of a partitioned RAID 1 array md127 using drives sda and sdb and is in a healthy state.

# Building the Second RAID

Here, we’ve made sure to use the assume-clean and metadata=1.2 options. The assume-clean option prevents the RAID assembly process from initializing the content of the parity blocks on the drives which saves a lot of time when assembling the RAID for the first time.

The metadata=1.2 option forces the RAID array metadata to a position close to the beginning of each drive in the array. This is most important for RAID 1 arrays but useful for others and prevents the component drives of the RAID array from being confused for separate individual drives by any process that tries to examine the drives for automatic mounting or other use.
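A creation command consistent with the two options described above might look like the following sketch. The RAID level (5), the array name (`/dev/md0`) and the member drives (`sdc`, `sdd`, `sde`) are inferred from the rest of this guide, and the function name `create_raid5` is hypothetical.

```shell
# Hypothetical helper: create a RAID 5 array from the given member drives.
# The number of members is taken from the argument count.
create_raid5() {
    array="$1"; shift
    mdadm --create "$array" --run --level=5 \
        --assume-clean --metadata=1.2 \
        --raid-devices="$#" "$@"
}

# Usage for the example system (destroys any data on the three drives):
#   create_raid5 /dev/md0 /dev/sdc /dev/sdd /dev/sde
```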

Checking the status of the drives in the system should show the newly added RAID array.

# Building the Storage Repository

Now we create a new storage repository on the new RAID array like this:
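As a hedged sketch, a local EXT storage repository on the array can be created with `xe sr-create`. The label and device below follow the example system; the function name `create_ext_sr` is hypothetical, and on a multi-host pool an explicit host would normally have to be specified as well.

```shell
# Hypothetical helper: create a local, non-shared EXT storage repository
# on a block device.
create_ext_sr() {
    label="$1"
    device="$2"
    xe sr-create name-label="$label" type=ext \
        device-config:device="$device" shared=false content-type=user
}

# Usage (in dom0): create_ext_sr "RAID storage" /dev/md0
```

On success, `xe sr-create` prints the UUID of the new repository.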

What we have now is a second storage repository named «RAID storage» using thin-provisioned EXT filesystem storage. It will show up and can be used within Xen Orchestra or XCP-ng Center and should behave like any other storage repository.

At this point, we’d expect that the system could just be used as is, virtual machines stored in the new RAID storage repository and that we can normally shut down and restart the system and expect things to work smoothly.

Unfortunately, we’d be wrong.

# Unstable RAID Arrays When Booting

What really happens when XCP-ng boots with a software RAID is that code in the Linux kernel and in the initrd file will attempt to find and automatically assemble any RAID arrays in the system. When there is just the single md127 RAID 1 array, the process works pretty well. Unfortunately, the system seems to occasionally break down when there are more drives, more arrays, and more complex arrays.

This causes several problems in the system, mainly due to the system not correctly finding and adding all component drives to each array or not starting arrays which do not have all components added but could otherwise start successfully.

A good example here would be the md0 RAID 5 array we just created. Rebooting the system in the state it is in now will often or even usually work without problems. The system will find both drives of the md127 RAID 1 boot array and all three drives of the md0 RAID 5 storage array, assemble the arrays and start them running.

Sometimes what happens is that the system either does not find all of the parts of the RAID or does not assemble them correctly or does not start the array. When that happens the md0 storage array will not start and looking at the /proc/mdstat array status will show the array as missing one or two of the three drives or will show all three drives but not show them as running. Another common problem is that the array is assembled with enough drives to run, two out of three drives in our case, but does not start. This can also happen if the array has a failed drive at boot even if there are enough remaining drives to start and run the array.

This can also happen to the md127 boot array where it will show with only one of the two drives in place and running. If it does not start and run at all, we will fail to get a normal boot of the system and likely be tossed into an emergency shell instead of the normal boot process. This is usually not consistent and another reboot will start the system. This can even happen when the boot RAID is the only RAID array in the system but fortunately that rarely happens.

So what can we do about this? Fortunately, we can give the system more information about what RAID arrays are in the system and specify that they should be started up at boot.

# Stabilizing the RAID Boot Configuration: The mdadm.conf File

The mdadm.conf file created for this system is here:

Each system and array will have different UUID identifiers so the numbers we have here are specific to this example. The UUID identifiers here will not work for another system. For each system, we’ll need a way to get them to include in the mdadm.conf file. The best way is using the mdadm command itself while the arrays are running like this:

Notice that this is output in almost exactly the same format as shown in the mdadm.conf file above. The UUID numbers are important and we’ll need them again later.

If we don’t want to type in the entire file, we can create the file like this.

And then edit the file to change the format of the array names from /dev/md/0 to /dev/md0 and remove the name= parameters from each line. This isn’t strictly necessary but keeps the array names in the file consistent with what is reported in /proc/mdstat and /proc/partitions and avoids giving each array another name (in our case those names would be localhost:127 and XCP-ng:0 ).
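The two manual edits (renaming `/dev/md/N` to `/dev/mdN` and dropping `name=`) can be applied with a small `sed` filter instead of a text editor. This is only a sketch: the function name `normalize_arrays` is hypothetical, and the usage line assumes the scan output comes from `mdadm --examine --scan` and that the file lives at `/etc/mdadm.conf`.

```shell
# Hypothetical helper: normalize ARRAY lines from an mdadm scan for mdadm.conf.
# Rewrites /dev/md/N to /dev/mdN and removes the name= parameter.
normalize_arrays() {
    sed -E -e 's#/dev/md/([0-9]+)#/dev/md\1#' \
           -e 's/[[:space:]]+name=[^[:space:]]+//'
}

# Usage: mdadm --examine --scan | normalize_arrays >> /etc/mdadm.conf
```

The metadata and UUID fields pass through unchanged, so the result still identifies each array uniquely.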

So what do these lines do? The first line instructs the system to allow or attempt automatic assembly for all arrays defined in the file. The second specifies to report errors in the system by email to the root user. The third is a list of all drives in the system participating in RAID arrays. Not all drives need to be specified on a single DEVICE line. Drives can be split among multiple lines and we could even have one DEVICE line for each drive. The last two are descriptions of each array in the system.

This file gives the system a description of what arrays are configured in the system and what drives are used to create them but doesn’t specify what to do with them. The system should be able to use this information at boot for automatic assembly of the arrays. Booting with the mdadm.conf file in place is more reliable but can still run into the same problems as before.

# Stabilizing the RAID Boot Configuration: The initrd Configuration

The other thing we need to do is give the system some idea of what to do with the RAID arrays at boot time. The way to do this is by adding instructions for the dracut program creating the initrd file to enable all RAID support, use the mdadm.conf file we created, and to start the arrays at boot time.

We can specify additional command line parameters to the dracut command which creates the initrd file to ensure that kernel RAID modules are loaded, the mdadm.conf file is used and so on but there are a lot of them. In addition, we would have to manually specify the command line parameters every time a new initrd file is built or rebuilt. Any time dracut is run any other way such as automatically as part of applying a kernel update, the changes specified manually on the command line would be lost. A better way to do it is to create a list of parameters that will be used automatically by dracut every time it is run to create a new initrd file.

We create a new file dracut_mdraid.conf in the dracut configuration folder (/etc/dracut.conf.d) that looks like this:

The first set instructs dracut to consider the mdadm.conf file we created earlier and also to include a copy of it in the initrd file, add dracut support for mdraid, include the kernel modules for mdraid support, and specifically support the two RAID devices by name.

The second set instructs the booting Linux kernel to support automatic RAID assembly, support mdraid and the mdraid configuration and also to search for and start the two RAID arrays via their UUID identifiers. These are the same UUID identifiers that we included in the mdadm.conf file and, like the UUID identifiers there, are specific to each array and system.

Something to note when creating the file is to allow extra space between command line parameters. That is why most of the lines have extra space before and after parameters within the quotes.
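For orientation, a file matching the two sets described above could look like the sketch below. dracut configuration files use shell variable syntax, which is why the extra spaces inside the quotes matter when values are appended with `+=`. The driver list and the UUID placeholders are assumptions: use the RAID levels present on your system and the UUIDs from your own mdadm.conf.

```shell
# Sketch of a dracut_mdraid.conf file (dracut reads it as shell assignments).
# The two UUID values are placeholders, not real identifiers.

# First set: what dracut includes when building the initrd
mdadmconf="yes"
add_dracutmodules+=" mdraid "
add_drivers+=" md_mod raid1 raid456 "
add_device+=" /dev/md0 /dev/md127 "

# Second set: parameters for the booting Linux kernel
kernel_cmdline+=" rd.auto=1 "
kernel_cmdline+=" rd.md=1 "
kernel_cmdline+=" rd.md.conf=1 "
kernel_cmdline+=" rd.md.uuid=<UUID-of-md0> "
kernel_cmdline+=" rd.md.uuid=<UUID-of-md127> "
```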

# Building and Testing the New initrd File

Now that we have all of this extra configuration, we need to get the system to include it for use at boot. To do that we use the dracut command to create a new initrd file like this:

This creates a new initrd file with the correct name matching the name of the Linux kernel and prints a list of modules included in the initrd file. Printing the list isn’t necessary but is handy to see that dracut is making progress as it runs.

When the system returns to the command line, it’s time to test. We’ll reboot the system from the console or from within Xen Orchestra or XCP-ng Center. If all goes well, the system should boot normally and correctly find and mount all 5 drives into the two RAID arrays. The easiest way to tell that is looking at the /proc/mdstat file.

We can see that both arrays are active and healthy with all drives accounted for. Examining the storage repositories using Xen Orchestra, XCP-ng Center, or xe commands shows that both the Local storage and RAID storage repositories are available.
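The same health check can be automated after each reboot. The sketch below reads `/proc/mdstat` and fails if any array is listed as inactive or if any status bracket such as `[UU_]` contains an underscore, which marks a missing member. The function name `mdstat_healthy` is hypothetical.

```shell
# Hypothetical helper: succeed only if no array is inactive or missing a member.
# Healthy arrays show e.g. [UU] or [UUU]; "_" marks a missing drive.
# Returns 0 if healthy, 1 if degraded or inactive, 2 if the file is unreadable.
mdstat_healthy() {
    mdstat_file="${1:-/proc/mdstat}"
    [ -r "$mdstat_file" ] || return 2
    if grep -Eq '\[[U_]*_[U_]*\]|: inactive' "$mdstat_file"; then
        return 1
    fi
    return 0
}

# Usage: mdstat_healthy || echo "RAID problem detected, check /proc/mdstat"
```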

# Troubleshooting

The most common problems in this process stem from one of a few things.

One common cause of problems is using the wrong type of drives. Just like when using drives for installation of XCP-ng, it is important to use drives that either have or emulate 512 byte disk sectors. Drives that use 4K byte disk blocks will not work unless they are 512e disks which emulate having 512 byte sectors. It is generally not a good idea to mix types of drives such as one 512n (native 512 byte sectors) and two 512e drives but should be possible to do in an emergency.

The second is that the drives were not empty before including them into the system. If there are any traces of RAID configuration or file systems on the drives, we could have problems with interference between those and the new configurations we’re creating on the drives when creating the RAID array or the EXT filesystem (or LVM if you use that for the storage array).

The way to avoid this problem is to make sure the drives are thoroughly wiped before starting the process. This can be done from the command line with the dd command like this:

This writes zeroes to every block on the drive and will wipe any traces of previous filesystems or RAID configurations.

If the drive is added to the RAID array correctly, we should start to see a lot of disk activity and we should be able to monitor the progress of it by looking at the /proc/mdstat file.

If the drive will not add to the array due to something left over on the drive, we should get an error from mdadm indicating the problem. In that case we should be able to use the dd command to wipe out the one drive as above and then attempt to add it into the array.

The other possibility is that the RAID array is created correctly but XCP-ng will not create a storage repository on it because some previous content of the drives is causing a problem. It should be possible to recover from this by writing zeroes to the entire array without needing to rebuild it like this:

After the probably very lengthy process of zeroing out the array, it should be possible to try again to create a storage repository on the RAID array.

Another common cause of problems is an error in either the mdadm.conf or dracut_mdraid.conf file. When one of those files is wrong, the system will often boot but fail to assemble or start the RAID arrays. The boot RAID array will usually be found and assembled automatically, but other RAID arrays may not.

In an extreme case, it should be possible to delete and re-create those files using the normal instructions, then rebuild the initrd file. It should also be possible, though slightly riskier, to remove the files, rebuild the initrd file and reboot, then re-create the files and the initrd file after rebooting.

Another possible but rare problem is caused by drives whose identifications shift from one system boot to the next. A drive that is named sdf on one boot might be named sdc on another. This is usually due to problems with the system BIOS or drivers, and can also be caused by hardware problems such as a drive taking wildly different amounts of time to start up from one boot to the next. It is also more common with some types of storage, such as NVMe storage.
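One way to see whether names have shifted is to record, on each boot, which kernel name each persistent identifier points to. The sketch below assumes the host populates /dev/disk/by-id with symlinks, as most Linux systems using udev do; kernel_names_by_id is a hypothetical helper, not an XCP-ng command.

```python
import os


def kernel_names_by_id(by_id_dir="/dev/disk/by-id"):
    """Return {persistent id: kernel name} by resolving the by-id symlinks."""
    mapping = {}
    if not os.path.isdir(by_id_dir):
        return mapping  # directory missing: nothing to report
    for entry in sorted(os.listdir(by_id_dir)):
        target = os.path.realpath(os.path.join(by_id_dir, entry))
        mapping[entry] = os.path.basename(target)
    return mapping
```

Saving the result after two different boots and comparing them shows which identifiers moved to a different sdX name.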

This type of problem is very difficult to diagnose and correct. It may be possible to resolve it using different BIOS or setup configurations in the host system or by updating BIOS or controller firmware.

# Updates and Upgrades

We will eventually need to update or patch the system to fix problems or close security holes as they are discovered.

Updates are patches that are applied to isolated parts of the system and replace or correct just the affected programs or data files. The patches are applied using the yum command from the host system’s command line or via the Xen Orchestra patches tab for a host or pool. The individual update patches should not affect either the added mdadm.conf or dracut_mdraid.conf files and any rebuild of the initrd file as part of a Linux kernel update should use the configuration from those files. In general, updates should be safe to apply without risk of affecting software RAID operation.

Upgrades made by booting from CD, or the equivalent via network booting, are different from updates. The upgrade process replaces the entire running system: it creates a backup copy of the current system in a separate disk partition, performs a full installation from the CD, then copies the configuration data and files from the previous system, upgrading them as needed. As part of a full upgrade, it is likely that one or both of the added RAID configuration files will not be copied from the original system to the upgraded system.

Before installing an upgrade via CD, check the running RAID arrays and look for any problems with drives by displaying the /proc/mdstat file, for example with cat /proc/mdstat.

This list shows both of the RAID arrays in the example system and shows that both are active and healthy. At this point it should be safe to shut down the host and reboot from CD to install the upgrade.
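The same pre-upgrade check can be scripted. This sketch treats an array as healthy when the [n/m] counter in /proc/mdstat shows all members present and the status flags contain no underscore. The SAMPLE_MDSTAT text is invented for illustration and array_health is a hypothetical helper name.

```python
import re

# Illustrative content: md0 is complete, md127 is running on one of two drives.
SAMPLE_MDSTAT = """\
Personalities : [raid1] [raid6] [raid5] [raid4]
md0 : active raid5 sdd[3] sdc[1] sdb[0]
      1953260544 blocks super 1.2 level 5, 512k chunk, algorithm 2 [3/3] [UUU]

md127 : active raid1 sda[0]
      976630272 blocks super 1.2 [2/1] [U_]

unused devices: <none>
"""

NAME_RE = re.compile(r"^(md\w+) : ")
STATUS_RE = re.compile(r"\[(\d+)/(\d+)\]\s+\[([U_]+)\]")


def array_health(mdstat_text):
    """Return {array: True if every member is present and up, else False}."""
    health = {}
    current = None
    for line in mdstat_text.splitlines():
        name = NAME_RE.match(line)
        if name:
            current = name.group(1)
            continue
        status = STATUS_RE.search(line)
        if status and current:
            expected, present, flags = status.groups()
            health[current] = expected == present and "_" not in flags
    return health
```

If any value in the result is False, it is safer to repair the array before shutting down for the upgrade.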

When installing the upgrade, no differences from a normal upgrade process are needed to account for either the RAID 1 boot array or the RAID 5 storage array. We should only need to ensure that the installer recognizes the previous installation and that we select an upgrade instead of an installation when prompted.

After the upgrade has finished and the host system reboots, there may be problems with recognizing one or both of the RAID arrays. A problem with the md127 RAID 1 boot array is very unlikely; if one occurs, it will most likely be the array operating with only one drive. Problems with the RAID 5 storage array are more likely but still not common; the most likely ones are drives missing from the array or the array failing to activate.

Once the host system has rebooted, check whether the mdadm.conf and dracut_mdraid.conf files are still in the correct locations and have the correct contents. It is possible that one or both of the files have been retained; in a test upgrade from XCP-ng version 8.2 to version 8.2 on the example system, the mdadm.conf file was preserved as part of the upgrade while the dracut_mdraid.conf file was not.

We then copy one or both files from the original system to the correct locations in the upgraded system using one or both of the commands:

After copying the files, we unmount the original system then create a new initrd file which will contain the RAID configuration files.

If the mdadm.conf or dracut_mdraid.conf files are damaged or cannot be copied from the backup copy of the system, it should be possible to create new copies of the files by following the instructions for creating them as part of an installation then using the above dracut command to create an updated initrd file.

After the rebuilding of the initrd file has finished, it should be safe to reboot the host system. At this point, the system should start and run normally.

# More and Different

So what if we don’t have or don’t want a system that’s identical to the example we just built in these instructions? Here are some of the possible and normal variations of software RAID under XCP-ng.

# No preexisting XCP-ng RAID 1

# Different Sized and Shaped RAID Arrays

The number of drives in a specific level of RAID array can also affect the performance of the array. A good rule of thumb for RAID 5 or 6 arrays is to have a number of data drives that is a power of two (2, 4, 8, etc.) plus the number of extra drives whose space is used for parity information: one drive for RAID 5 and two drives for RAID 6. The RAID 5 array we created in the example system meets that recommendation by having 3 drives. A 5 drive RAID 5 array, or a 4 or 6 drive RAID 6 array, would as well. A good rule of thumb for RAID 10 arrays is to have an even number of drives, although in Linux an even number of drives is not a requirement for RAID 10 as it is on other types of systems. In addition, it may be possible to get better performance by creating a 2 drive RAID 10 array instead of a 2 drive RAID 1 array.
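The RAID 5 and 6 rule of thumb above is simple arithmetic, sketched here as a small check. The function name is illustrative only.

```python
# Number of drives' worth of space used for parity at each RAID level.
PARITY_DRIVES = {5: 1, 6: 2}


def follows_rule_of_thumb(level, drives):
    """True if (drives - parity drives) is a power of two that is at least 2."""
    data_drives = drives - PARITY_DRIVES[level]
    return data_drives >= 2 and (data_drives & (data_drives - 1)) == 0
```

For example, follows_rule_of_thumb(5, 3) holds for the example system, while a 4 drive RAID 5 array (3 data drives) does not meet the recommendation.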

# Avoiding the RAID 5 and 6 «Write Hole»

RAID 5 and 6 arrays have a problem known as the «write hole», which affects their consistency after a failure during a disk write, such as a crash or power failure. The problem happens when a chunk of RAID protected data known as a stripe is changed on the array. To make the change, the operating system reads the stripe of data, changes the requested portion of the data, recomputes the disk parity for RAID 5 or RAID 6, then rewrites the data to the disks. If a crash or power outage interrupts that process, some of the data written to disk will reflect the new content of the stripe while some on other disks will reflect the old content. In general, the system may be able to detect that there is a problem by rereading the entire stripe and finding that the parity portion does not match. However, the system has no way to determine which portions of the stripe were written with new data and which contain old data, so it cannot properly reconstruct the stripe after a crash.
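The effect can be shown with a toy model of a 3 drive RAID 5 stripe, where parity is the byte-wise XOR of the two data chunks. This is a simplified illustration of the principle, not how the Linux md driver is implemented; all names and data values are made up.

```python
from functools import reduce


def xor_parity(chunks):
    """XOR equal-length byte chunks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*chunks))


def stripe_is_consistent(data_chunks, parity):
    """A stripe is consistent when the stored parity matches the data."""
    return xor_parity(data_chunks) == parity


# Consistent stripe before the write: two data chunks and their parity.
old_data = [b"AAAA", b"BBBB"]
parity = xor_parity(old_data)

# The first chunk is rewritten, then a crash prevents the parity update.
on_disk_data = [b"CCCC", b"BBBB"]
on_disk_parity = parity  # stale: still describes the old stripe

# Rereading the stripe reveals the mismatch...
mismatch_detected = not stripe_is_consistent(on_disk_data, on_disk_parity)

# ...but parity alone cannot say which chunk is wrong. Rebuilding the first
# chunk from parity silently returns the OLD data, and rebuilding the second
# chunk from parity returns data that was never written at all.
rebuilt_first = xor_parity([on_disk_parity, on_disk_data[1]])
rebuilt_second = xor_parity([on_disk_parity, on_disk_data[0]])
```

Here rebuilt_first equals the old b"AAAA" rather than the new b"CCCC", and rebuilt_second differs from b"BBBB", which is why a drive failure combined with an unrepaired write hole can corrupt data that was not even being written.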

This problem can only happen if the system is interrupted during a write to a RAID and tends to be rare.

Generally, the best way to mitigate the problem is by avoiding it. Use good quality server hardware with known stable hardware drivers to avoid possible sources of crashes. Having good power protection such as redundant power supplies and battery backup units and using software to automatically shut down in case of a power outage will limit possible power-related problems.

If that is not enough, there are other methods to avoid the write hole by making it possible for the RAID system to recover after a crash while working around the write hole problem.

# Different Sized Drives

We might need to create a RAID array where our drives are not identical and each drive has a different number of available blocks. This might come up if we need to create a RAID array but have two of one type of drive and one of another such as two WD drives and one Seagate or two 1TB drives and one that is 1.5TB or 2TB.

The easiest solution to creating a working RAID array in this situation is to partition the drives and create a RAID array using the partitions instead of using the entire drive.

To do this, we get the sizes of the disks in the system by examining the /proc/partitions file. Starting with the smallest of the disks to be used in the array, use gdisk or sgdisk to create a single partition of type fd00 (Linux RAID) using the maximum space available. Examine and record the size of the partition created and save the changes. Repeat the process with the remaining drives to be used except use the size of the partition created on the first drive instead of the maximum space available.

This should leave you with drives that each have a single partition and all of the partitions are the same size even though the drives are not.
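Finding the smallest drive from /proc/partitions can be sketched as follows. The file lists sizes in 1 KiB blocks; the SAMPLE_PARTITIONS text and the drive names are invented for illustration, and the helper names are hypothetical.

```python
# Illustrative /proc/partitions content: two 1 TB drives and one 1.5 TB drive.
SAMPLE_PARTITIONS = """\
major minor  #blocks  name

   8       16  976762584 sdb
   8       32  976762584 sdc
   8       48 1465138584 sdd
"""


def disk_sizes(partitions_text):
    """Return {device name: size in 1 KiB blocks} from /proc/partitions text."""
    sizes = {}
    for line in partitions_text.splitlines():
        fields = line.split()
        # Data rows have four fields with a numeric block count in the third.
        if len(fields) == 4 and fields[2].isdigit():
            sizes[fields[3]] = int(fields[2])
    return sizes


def smallest_drive(partitions_text, drives):
    """Return (name, blocks) of the smallest of the given drives."""
    sizes = disk_sizes(partitions_text)
    name = min(drives, key=lambda d: sizes[d])
    return name, sizes[name]
```

The drive returned here is the one to partition first; the partition created on it will be slightly smaller than the raw size reported, so the recorded partition size, not this number, is what the other drives should match.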

It should also be possible to create the partitions on the drives outside of the XCP-ng system using a bootable utility disk that contains partitioning utilities such as gparted.

# More Than One Additional Array

We then create another storage repository as we did previously making sure to give it a different name and use /dev/md1 instead of /dev/md0 in the command line.

# Guest UEFI Secure Boot

Enabling UEFI Secure Boot for guests ensures that XCP-ng VMs will only execute trusted binaries at boot. In practice, these are the binaries released by the operating system (OS) team for the OS running in the VM (Microsoft Windows, Debian, RHEL, Alpine, etc.).

Guest UEFI Secure Boot will soon be made available through an update to XCP-ng 8.2.

Until then, you can test it with:

You can provide feedback or ask questions on the dedicated forum thread.

# Requirements

Note: it’s not necessary that the XCP-ng host boots in UEFI mode.

# How XCP-ng Manages the Certificates

In this guide, we often refer to those 4 UEFI variables as the Secure Boot certificates, or simply the certificates.

The certificates are stored at several levels:

To install or modify the certificates on the pool, use the secureboot-certs command line utility. See Configure the Pool. Once secureboot-certs is called, the XAPI DB entry for the pool and all the hosts is populated with a base64-encoded tarball of the UEFI certificates. At this stage the certificates are not yet installed on disk; they exist only in the XAPI DB.
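To make the storage format concrete, the sketch below packs a set of files into a tarball, base64-encodes it, and reverses the process. It only illustrates the general idea of a base64-encoded tarball; the member names, the use of an uncompressed tar, and the function names are assumptions, not the actual layout used by secureboot-certs or XAPI.

```python
import base64
import io
import tarfile


def pack_certs(certs):
    """Pack {file name: bytes} into a tar archive and return it base64-encoded."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w") as tar:
        for name, payload in certs.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(payload)
            tar.addfile(info, io.BytesIO(payload))
    return base64.b64encode(buffer.getvalue()).decode("ascii")


def unpack_certs(encoded):
    """Decode a base64-encoded tar archive back into {file name: bytes}."""
    raw = base64.b64decode(encoded)
    with tarfile.open(fileobj=io.BytesIO(raw), mode="r") as tar:
        return {
            member.name: tar.extractfile(member).read()
            for member in tar.getmembers()
            if member.isfile()
        }


# Placeholder names and contents, one entry per UEFI variable.
example = {"PK": b"pk-bytes", "KEK": b"kek-bytes", "db": b"db-bytes", "dbx": b"dbx-bytes"}
encoded = pack_certs(example)
```

Encoding the archive as base64 lets binary certificate data be stored as a plain text field in a database entry.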

When a UEFI VM starts:

# Configure the Pool

To download and install XCP-ng’s default certificates, see Install the Default UEFI Certificates.

For custom certificates (advanced use), see Install Custom UEFI Certificates.

# Install the Default UEFI Certificates

secureboot-certs supports installing a default set of certificates across the pool.

Except for the PK, which is already present on the host’s disk, all certificates are downloaded from official sources (microsoft.com and uefi.org).

The default certificates are sourced (or generated) as follows: